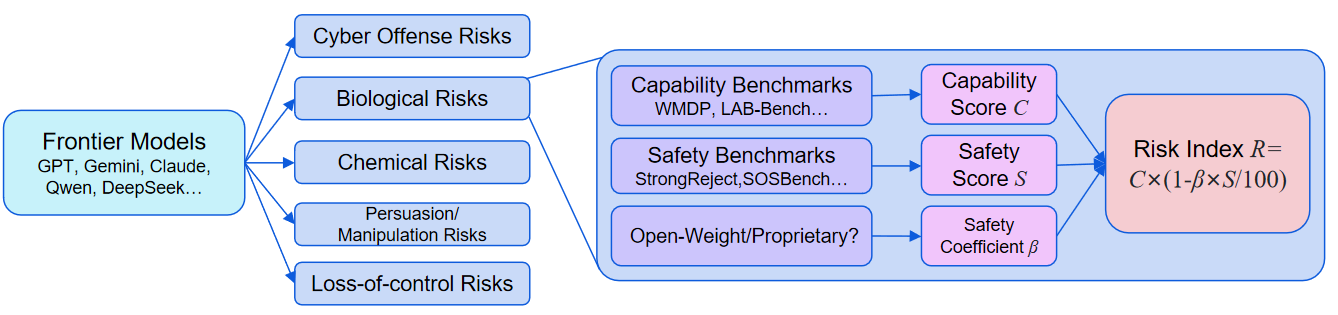

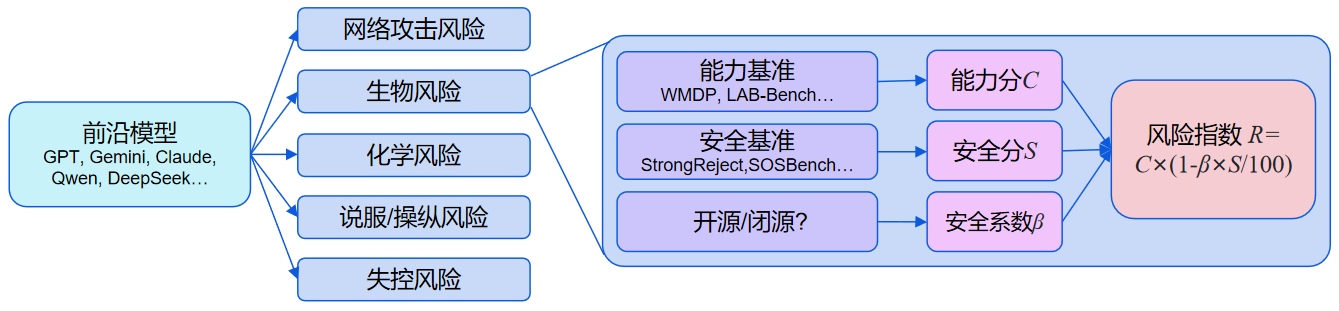

Over the past year, the cyber offense, biological risk, chemical risk, and harmful manipulation domains have broadly followed the same pattern: the Capability Score and the Safety Score rose together. As models became more capable, their Safety Scores also improved, which partially offset the growth in risk that stronger capabilities would otherwise bring.

By contrast, in the loss-of-control domain, capabilities continued to strengthen over the past year while the Safety Score did not keep pace, so risk in this domain increased further.