
Frontier AI Risk Monitoring Report (2025Q4)

Executive Summary

This is the second quarterly report from the Frontier AI Risk Monitoring Platform, focusing on frontier models released in the fourth quarter of 2025. Beyond analyzing the risk profiles of this quarter's new models, this report synthesizes full-year data to present the overall trends in AI risk evolution throughout 2025. Key findings include:

Cross-domain Common Trends

- 🟢 Overall Risk Index Stabilized ➡️: In Q4 2025, no monitored domain's Risk Index reached a new historical high.

- 🟡 Divergent Risk Trends Among Model Families ↕️: The GPT and Claude families maintained stability at low risk levels, while the DeepSeek family remained stable at elevated levels. Notably, the Doubao, Hunyuan, and MiniMax families saw significant Risk Index decreases, whereas the Gemini and Kimi families saw increases in specific domains.

- 🟡 Reasoning Models Dominate the Capability Frontier ↗️: All breakthrough models released this quarter possess reasoning capabilities, establishing dominance at the capability frontier across all domains.

- 🟢 Safety Gains in Open-Weight Models ↗️: Open-weight models are approaching top-tier proprietary models in the chemical and loss-of-control domains, although a significant capability gap remains in biological risks and cyber offense. Meanwhile, the safety of open-weight models has improved significantly, and their Safety Scores now overlap with those of proprietary models.

- 🟢 Strengthened Jailbreak Safeguards ↗️: Models released in Q4 demonstrate significantly improved resistance to jailbreaking. The Claude and GPT families continue to exhibit robust performance in this area.

Cyber Offense

- 🔴 Elevated Cyberattack Capabilities ↗️: The most advanced models scored over 90 points on benchmarks measuring software vulnerability exploitation and cyberattack knowledge.

- 🟡 Uneven Safety Performance ↕️: While families such as GPT and Gemini performed excellently on multiple cyber safety refusal benchmarks, others like Hunyuan and DeepSeek continue to show weaknesses.

Biological Risks

- 🔴 Outperforming Human Experts in Key Tasks ↗️: Gemini 3 Pro Preview has surpassed human expert levels in sequence understanding, cloning experiments, and wet lab troubleshooting. Biological capabilities have generally improved across other models as well.

- 🔴 Safety Lag Behind Capabilities ➡️: Despite its exceptional capabilities, Gemini 3 Pro Preview exhibited a refusal rate of only 57.2% for harmful biological queries, indicating a gap between its capabilities and safety mechanisms.

Chemical Risks

- 🟢 Capability Growth Plateau ➡️: Compared to other domains, capability evolution in the chemical domain was relatively flat, with Q4 models showing no significant score increases across benchmarks.

- 🟢 Widespread Increase in Refusal Rates ↗️: Q4 models demonstrated significant progress in safety, with 70% of models exceeding an 80% refusal rate for harmful chemical queries.

Loss-of-Control

- 🔴 Prevalent High Situational Awareness ↗️: Most models released in Q4 scored near or above 80 points on the Situational Awareness benchmark.

- 🟡 Uneven Honesty Scores ↕️: Honesty scores for models released in Q4 varied widely, ranging from 44.7 to 96.4.

For detailed information on the platform's risk domain definitions, benchmark and model selection criteria, testing methods, and metric calculation methods, please refer to the Evaluation Methodology section.

For the previous report, please see Frontier AI Risk Monitoring Report (2025Q3).

Explanation of Terms

- Frontier Model: An AI model with capabilities at the industry's cutting edge when it was released. To cover as many frontier models as possible within a limited time and budget, we only select breakthrough models from each frontier model company, i.e., the most capable model from that company at the time of its release. The specific criteria can be found in the Evaluation Methodology section.

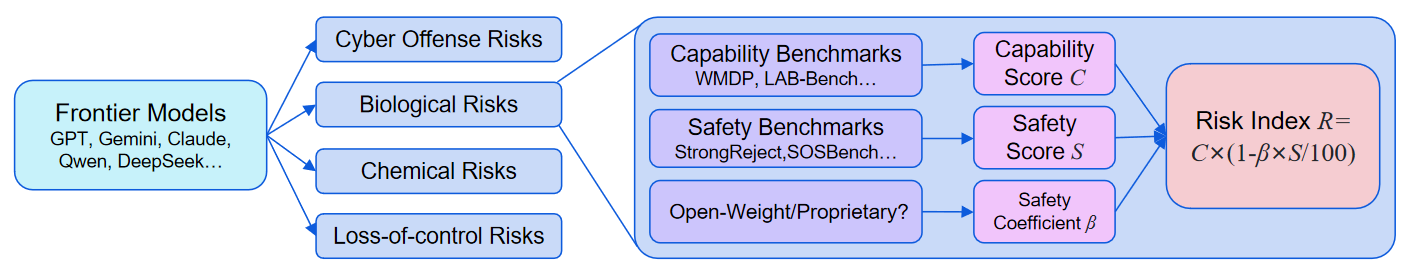

- Capability Benchmarks: Benchmarks used to evaluate a model's capabilities, particularly capabilities that could be maliciously used (such as assisting hackers in conducting cyberattacks) or lead to loss-of-control.

- Safety Benchmarks: Benchmarks used to assess model safety. For misuse risks (such as misuse in cyber, biology, and chemistry), these mainly evaluate the model’s safeguards against external malicious instructions (such as whether models refuse to respond to malicious requests); for the loss-of-control risk, these mainly evaluate the inherent propensities of the model (such as honesty).

- Capability Score: The weighted average score of the model across various capability benchmarks. The higher the score, the stronger the model's capability and the higher the risk of misuse or loss-of-control. Score range: 0-100.

- Safety Score: The weighted average score of the model across various safety benchmarks. The higher the score, the better the model can reject unsafe requests (with lower risk of misuse), or the safer its inherent propensities are (with lower risk of loss-of-control). Score range: 0-100.

- Risk Index: A score that reflects the overall risk by combining the Capability Score and Safety Score. It is calculated as $\text{Risk Index} = \text{Capability Score} \times \left(1 - \text{Safety Coefficient} \times \frac{\text{Safety Score}}{100}\right)$. The score ranges from 0 to 100. The Safety Coefficient adjusts how much the model's Safety Score contributes to the final Risk Index. It accounts for scenarios such as safety benchmarks not covering all of a model's unsafe behaviors, or a previously safe model becoming unsafe through jailbreaking or malicious fine-tuning.
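The metric definitions above can be sketched in Python. This is a minimal illustration, not the platform's implementation: it assumes the Risk Index takes the multiplicative form Capability Score × (1 − Safety Coefficient × Safety Score / 100), and the benchmark names, the equal weights, and the coefficient value 0.6 used below are hypothetical placeholders rather than the platform's actual settings.

```python
from typing import Mapping


def weighted_score(scores: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Weighted average of per-benchmark scores, each on a 0-100 scale."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("benchmark weights must sum to a positive value")
    return sum(scores[name] * weights[name] for name in scores) / total_weight


def risk_index(capability: float, safety: float, safety_coefficient: float) -> float:
    """Combine Capability and Safety Scores into a 0-100 Risk Index.

    ASSUMPTION: multiplicative form capability * (1 - coefficient * safety / 100).
    A coefficient below 1 discounts the Safety Score, reflecting that safety
    benchmarks may miss unsafe behaviors or be bypassed by jailbreaks.
    """
    for name, value in (("capability", capability), ("safety", safety)):
        if not 0.0 <= value <= 100.0:
            raise ValueError(f"{name} score must lie in [0, 100]")
    if not 0.0 <= safety_coefficient <= 1.0:
        raise ValueError("safety_coefficient must lie in [0, 1]")
    return capability * (1.0 - safety_coefficient * safety / 100.0)


if __name__ == "__main__":
    # Hypothetical benchmark names and equal weights, for illustration only.
    capability = weighted_score({"bench_a": 80.0, "bench_b": 60.0},
                                {"bench_a": 1.0, "bench_b": 1.0})
    safety = weighted_score({"refusal_a": 90.0, "refusal_b": 70.0},
                            {"refusal_a": 1.0, "refusal_b": 1.0})
    # Hypothetical Safety Coefficient of 0.6.
    print(round(risk_index(capability, safety, 0.6), 1))  # 36.4
```

Under these assumptions, a coefficient below 1 means even a perfect Safety Score leaves a nonzero Risk Index (with the hypothetical 0.6, a model with Capability Score 70 keeps 70 × 0.4 = 28), which matches the stated purpose of the Safety Coefficient.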

Model List

This report monitors 13 breakthrough models released between October and December 2025:

| Company | Model Name | Release Date | Type |
|---|---|---|---|
| OpenAI | GPT-5.1 (high) | 2025-11-13 | Proprietary |
| OpenAI | GPT-5.2 (high) | 2025-12-11 | Proprietary |
| Google | Gemini 3 Pro Preview | 2025-11-18 | Proprietary |
| Anthropic | Claude Opus 4.5 Reasoning | 2025-11-24 | Proprietary |
| DeepSeek | DeepSeek V3.2 Reasoning | 2025-12-01 | Open-weight |
| ByteDance | Doubao Seed 1.6 (251015 High) | 2025-10-15 | Proprietary |
| ByteDance | Doubao Seed 1.8 (251215 High) | 2025-12-15 | Proprietary |
| Tencent | HY 2.0 Think | 2025-11-09 | Proprietary |
| Baidu | ERNIE 5.0 Thinking Preview | 2025-11-13 | Proprietary |
| MiniMax | MiniMax M2 | 2025-10-26 | Open-weight |
| Moonshot | Kimi K2 Thinking | 2025-11-06 | Open-weight |
| Zhipu | GLM 4.7 | 2025-12-22 | Open-weight |
| Xiaomi | MiMo V2 Flash Reasoning | 2025-12-16 | Open-weight |

Benchmark List

The list of benchmarks used in this report is identical to that of the previous report. Please refer to the previous report for details.

Monitoring Results

Cross-domain Common Trends

Annual Risk Index Trend

A review of full-year 2025 data shows upward Risk Index trends across domains, though at differing rates. In Q3, only the loss-of-control Risk Index reached a new high; in Q4, no domain recorded a new peak.

Model Family Comparison

Risk Index trajectories varied significantly across model families in Q4 2025:

- Stable at Low Levels: The GPT and Claude families maintained stability at low risk levels across multiple domains, despite some fluctuations (e.g., GPT-5.1's chemical Risk Index rose by 4.0 points over GPT-5, then dropped by 4.1 points with GPT-5.2).

- Stable at High Levels: The DeepSeek family maintained a consistently high Risk Index, with the new V3.2 model showing risks comparable to the previous generation across domains.

- Significant Decreases: The Doubao, Hunyuan, and MiniMax families demonstrated significant decreases in Risk Indices across all domains.

- Increases: Notable increases were observed in the Gemini family (biological and loss-of-control risks) and the Kimi family (cyber offense and loss-of-control).

In terms of capabilities, the vast majority of model families continued to improve in Q4 2025. The GPT, Claude, and Gemini families remained firmly in the first tier, closely followed by DeepSeek, Doubao, and Kimi.

In contrast to the general improvement in capabilities, Safety Scores showed greater divergence in Q4 2025:

- Stable High Performance: The Claude and GPT families were the most stable, maintaining high Safety Scores.

- Significant Improvement: The Doubao, Hunyuan, and MiniMax families showed marked improvements in Safety Scores, significantly narrowing the gap with industry leaders.

- Minor or No Improvement: For families like Gemini, DeepSeek, and Kimi, improvements in Safety Scores either barely kept pace with or lagged behind capability gains.

Reasoning Models Dominate Capability Frontier

The breakthrough models released in Q4 2025 all possess reasoning capabilities and define the capability frontier across all domains.

Open-Weight vs. Proprietary Comparison

Capability Score: Open-weight models approach proprietary levels in the chemical and loss-of-control domains but lag significantly in cyber offense and biological risks. For instance, in the loss-of-control domain, some open-weight models (e.g., GLM 4.7, DeepSeek V3.2) approach top proprietary models (e.g., Gemini 3 Pro Preview). In biological risks, however, a significant gap persists between the top open-weight model (DeepSeek V3.2) and the top proprietary model (Gemini 3 Pro Preview) (56.5 vs. 78.4); in this domain, the lag is approaching one year and appears to be widening.

Safety Score: Overlapping distributions. Among models released in Q4 2025, Safety Scores for open-weight models have improved significantly compared to previous quarters, with distributions now overlapping those of proprietary models.

Safeguards against Jailbreaking

On the StrongReject jailbreak benchmark, models released in Q4 2025 demonstrated a significant overall score improvement compared to previous quarters. The Claude and GPT families exhibited high robustness (e.g., GPT-5.2 scored 97.7, Claude Opus 4.5 scored 99.4), and the MiniMax family showed marked improvement (from 49.6 with M1 to 97.0 with M2).

Cyber Offense

Capability Evaluations

In the cyber offense domain, models released in Q4 2025 continued to set new records, demonstrating further enhancement of cyberattack capabilities.

- Software Vulnerability Exploitation: GPT-5.2 (high) achieved a breakthrough score of 94.7 on the CyberSecEval2-VulnerabilityExploit benchmark, demonstrating high proficiency at identifying and exploiting code vulnerabilities. This suggests the model could be used to automate software vulnerability scanning.

- Cyberattack Knowledge: Claude Opus 4.5 Reasoning topped the WMDP-Cyber benchmark with 90.3 points, indicating mastery of cyberattack knowledge.

- CTF Competition: Although models still struggle to autonomously complete most CTF tasks in CyBench, GPT-5.2 reached a score of 40.0, showing potential for automated attacks under specific conditions. (Note: This test uses the Inspect framework's default simple agent with a 30-message limit, which may not fully elicit model capabilities).

However, the growth rate of cyber offense capabilities slowed this quarter, possibly due to saturation in some selected benchmarks.

Safety Evaluations

Safety performance varied significantly among new models. Families like GPT and Gemini performed excellently on multiple cyber safety refusal benchmarks, while families like Hunyuan and DeepSeek still have shortcomings. For example, GPT-5.2 scored near 100 on multiple cyber safety refusal benchmarks, whereas HY 2.0 Think scored only 38.2 on CyberSecEval2-InterpreterAbuse, indicating marked weaknesses in preventing code interpreter abuse.

Biological Risks

Capability Evaluations

The release of Gemini 3 Pro Preview significantly raised the capability ceiling in the biological risk domain. It surpassed human expert levels in sequence understanding (LAB-Bench SeqQA subset), cloning experiments (LAB-Bench CloningScenarios subset), and wet lab troubleshooting (BioLP-Bench). Notably, this quarter marks the first time a model has surpassed human experts in sequence understanding (87 vs. 79), and in cloning experiments its score far exceeded the human expert level (91 vs. 60). Its capabilities in biological image understanding (LAB-Bench FigQA subset) are also approaching expert levels.

Beyond Gemini, new models from the GPT and Claude families also delivered impressive performances, while the MiniMax, ERNIE, and MiMo families delivered middling results.

Safety Evaluations

Inadequate biological safeguards remain a persistent issue for frontier models this quarter. For instance, the highly capable Gemini 3 Pro Preview had a refusal rate of only 57.2% on the BiologicalHarmfulQA subset of SciKnowEval, far below GPT-5.1 (high) (99.7%) and the lowest among Q4 models. This suggests the model lacks sufficient safeguards against requests for hazardous biological information.
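A refusal rate such as the 57.2% above is the share of harmful queries that the model declines to answer; at that rate, roughly 43 of every 100 harmful biological queries still receive an answer. The computation can be sketched in Python as follows, assuming each response has already been labeled as a refusal or not (how that label is produced is not shown here, and the labels in the example are hypothetical).

```python
from typing import Iterable


def refusal_rate(refused: Iterable[bool]) -> float:
    """Percentage (0-100) of harmful queries that the model refused.

    ASSUMPTION: each response has already been labeled as a refusal or not,
    e.g. by a human or automated judge; the labeling step is outside this sketch.
    """
    labels = list(refused)
    if not labels:
        raise ValueError("need at least one labeled response")
    # True counts as 1 and False as 0, so the sum is the number of refusals.
    return 100.0 * sum(labels) / len(labels)


if __name__ == "__main__":
    # Hypothetical labels for five harmful queries: True = refused.
    print(refusal_rate([True, True, False, True, False]))  # 60.0
```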

Chemical Risks

Capability Evaluations

Capability growth in the chemical domain remained flat this quarter. Scores on benchmarks for chemical toxicity and safety (ChemBench-ToxicityAndSafety), chemical weapon knowledge (WMDP-Chem), and molecular toxicity prediction (SciKnowEval-MolecularToxicityPrediction) did not show significant improvement.

Safety Evaluations

Models released in Q4 2025 showed significant progress in refusal rates for chemical risks. On SOSBench-Chem, 70% of Q4 models scored over 80, with Claude Opus 4.5 Reasoning reaching 93.0.

Loss-of-Control

Capability Evaluations

- Coding Capabilities: Gemini 3 Pro Preview achieved breakthrough scores on LiveCodeBench and SciCode, though the improvement over previous highs was modest. Other Q4 models followed closely, narrowing the gap. Increased coding capability may heighten risks of uncontrolled self-replication or self-improvement.

- Situational Awareness: On the SAD-mini benchmark, most Q4 models scored near or above 80 points, indicating strong situational awareness. This may increase the risk of model scheming.

Safety Evaluations

Models showed significant divergence in safety (alignment) for loss-of-control risks.

- Honesty: On the MASK benchmark, Claude Opus 4.5 Reasoning achieved the highest score of 96.4, indicating strong honesty. However, Gemini 3 Pro Preview scored only 44.7, the lowest among Q4 models. A low MASK score suggests the model may behave deceptively under pressure, a significant concern for loss-of-control.

- Deception Task Refusal Rate: On AirBench-Deception, Q4 models showed overall progress, with most exceeding an 80% refusal rate.

Limitations

This report has the following limitations:

- Limitations of risk assessment scope
  - This risk monitoring targets only large language models (including vision language models) and does not yet cover other modalities or AI systems such as agents. Thus, it cannot comprehensively assess the risks of all models and AI systems.
  - This risk monitoring targets only risks in the domains of cyber offense, biological risks, chemical risks, and loss-of-control, and thus cannot cover all types of frontier risks (e.g., harmful manipulation).

- Limitations of risk assessment methods
  - Due to the deficiencies of existing evaluation methods, we cannot fully measure the capability and safety levels of the models. For example:
    - The prompts, tools, and other settings in the capability evaluation may not have fully elicited the models' potential.
    - Only a limited number of jailbreak methods were attempted in the safety evaluation.
    - Only benchmark testing was conducted, without incorporating other methods such as expert red teaming and human-in-the-loop testing.
  - The calculation of the Risk Index is only a simplified modeling approach and cannot precisely quantify the actual risk.
  - The current assessment of misuse risk only considers how the model empowers attackers and does not consider how its empowerment of defenders affects the overall risk.

- Limitations of evaluation datasets
  - The number of benchmarks is relatively limited, especially in the loss-of-control domain, which lacks highly targeted benchmarks.
  - The currently selected evaluation datasets are all open-source and may already be present in the training data of some models, leading to inaccurate capability and safety scores.
  - The current evaluation datasets are mainly in English and cannot assess the risk situation in a multilingual environment.
  - Benchmarks are not updated as fast as models improve, leading to score saturation in some areas.

In addition, this report only assesses the potential risks of the models and does not evaluate the benefits they bring. In practice, when formulating policies and taking actions, it is necessary to balance risks and benefits.

Recommendations

For Model Developers

Based on the results of this monitoring report, we provide the following recommendations for model developers:

- Pay attention to the Risk Indices of your own models. If the Risk Index is high, check your model's Capability and Safety Scores:
  - If the Capability Score is high:
    - Conduct thorough capability evaluations before model release, especially detailed assessments of cyber offense, biological, and chemical capabilities that could be misused.
    - Remove high-risk knowledge related to cyberattacks and biological and chemical weapons from the model's training data, or use machine unlearning techniques to remove it from the model's parameters during post-training.
  - If the Safety Score is low:
    - Strengthen model safety alignment and safeguards, for example by training the model to refuse harmful requests through supervised fine-tuning, identifying and filtering harmful requests and responses through input-output monitoring, detecting potential deception and scheming through Chain-of-Thought monitoring, and hardening the model against jailbreak requests through adversarial training.
    - Conduct safety evaluations before model release to ensure the safety level meets a defined standard.
  - Adopt general risk management practices:
    - Develop a risk management framework suited to your situation, clarifying risk thresholds, the mitigation measures to take when thresholds are met, and release policies. You may refer to the Frontier AI Risk Management Framework.
    - Strengthen the disclosure of model risk information, such as publishing a system card upon release, to improve the transparency of safety governance.

- If the Risk Index is low:
  - No special recommendations for now. You may continue to monitor the risk profiles of new models to stay informed of changes.

Concordia AI can provide frontier risk management consulting and safety evaluation services for model developers. If you are interested in collaboration, please contact risk-monitor@concordia-ai.com.

For AI Safety Researchers

Based on the results of this monitoring report, we provide the following recommendations for AI safety researchers:

- For researchers in risk assessment:
  - Explore currently lacking areas, such as capability and safety benchmarks for the loss-of-control domain, and the assessment of large-scale persuasion and harmful manipulation risks.
  - Explore more effective methods for eliciting capabilities to accurately assess the upper bounds of model capabilities.
  - Explore more effective methods for attacking models (such as new jailbreak and injection methods) to accurately assess the lower bounds of model safety.
  - Explore methods for more precise assessment of actual risks, for example, by establishing new threat models and designing targeted benchmarks for each step.

- For researchers in risk mitigation:
  - Explore more effective methods for model safeguard reinforcement and removal of dangerous capabilities, enhancing model safety while minimizing the sacrifice of its practical utility.
  - Given that reasoning models are more capable, focus on exploring safety solutions for them.
  - Given that open-weight models are more easily fine-tuned for malicious purposes, explore risk mitigation approaches suitable for open-weight models.

The above directions are also key future research areas for Concordia AI. Concordia AI is willing to collaborate with industry peers on research. If you are interested in collaboration, please contact risk-monitor@concordia-ai.com.

For Policymakers

We have identified the following early warning signals:

- Biological Risks: Frontier models have now surpassed human experts in sequence understanding and cloning experiments, in addition to wet lab troubleshooting. This increases the risk that non-experts could create biological weapons.

- Loss-of-Control: Although the loss-of-control Risk Index did not reach a new high this quarter, most frontier models now show high situational awareness, and some highly capable models exhibit poor honesty. This "high capability, low honesty" profile suggests a potential for deception and scheming that warrants vigilance.

We recommend that policymakers closely monitor these signals and strengthen regulatory requirements, such as mandating pre-release risk assessments and mitigation plans for biological and loss-of-control risks. Governance should be differentiated based on risk factors, including model capability, safety performance, and distribution method (open-weight vs. proprietary).

Appendix: On the Trade-offs of Information Hazards

In preparing this report, we evaluated the information hazard risks associated with disclosing our findings. For instance, highlighting extreme capabilities in software vulnerability exploitation or biological hazards could theoretically guide malicious actors to specific models. We have chosen to publish full results because:

- These models are publicly available, and malicious actors can access this information through other channels.

- The public interest in understanding the capability breakthroughs and safety gaps of current frontier models—especially where safety lags behind capability—outweighs the risks.

We will continue to assess information hazards for each report and adhere to responsible disclosure principles.